Configuring the Director

Of all the configuration files needed to run Bacula, the Director's is the most complicated, and the one that you will need to modify the most often as you add clients or modify the FileSets.

For a general discussion of configuration files and resources including the data types recognized by Bacula, please see the Configuration chapter of this manual.

Director Resource Types

Director resource types may be the following:

Job, JobDefs, Client, Storage/Autochanger, Catalog, Schedule, FileSet, Pool, Director, Console, Counter, and Messages. We present them here in the most logical order for defining them.

Note, everything revolves around a job and is tied to a job in one way or another.

- Director - to define the Director's name and its access password used for authenticating the Console program. Only a single Director resource definition may appear in the Director's configuration file. If you have either /dev/random or bc on your machine, Bacula will generate a random password during the configuration process, otherwise it will be left blank.

- Job - to define the backup/restore Jobs and to tie together the Client, FileSet and Schedule resources to be used for each Job. Normally, you will have Jobs of different names corresponding to each client (i.e., one Job per client, but a different one with a different name for each client).

- JobDefs - optional resource for providing defaults for Job resources.

- Schedule - to define when a Job is to be automatically run by Bacula's internal scheduler. You may have any number of Schedules, but each job will reference only one.

- FileSet - to define the set of files to be backed up with each job. You may have any number of FileSets but each Job will reference only one.

- Client - to define what Client is to be backed up. You will generally have multiple Client definitions. Each Job will reference only a single client.

- Storage (or Autochanger) - to define on what physical device the Volumes should be mounted. You may have one or more Storage definitions.

- Pool - to define the pool of Volumes that can be used for a particular Job.If there is a large number of clients or volumes, you may want to have multiple Pools. Pools allow you to restrict a Job (or a Client) to use only a particular set of Volumes.

- Catalog - to define in what database to keep the list of files and the Volume names where they are backed up. Most people only use a single catalog. However, if you want to scale the Director to many clients, multiple catalogs can be helpful. Multiple catalogs require a bit more management because in general you must know what catalog contains what data. Currently, all Pools are defined in each catalog. This restriction will be removed in a later release.

- Console - to define how the administrator or user can interact with the Director.

- Counter - to define a counter variable that can be accessed by variable expansion used for creating Volume labels.

- Messages - to define where error and information messages are to be sent or logged. You may define multiple different message resources and hence direct particular classes of messages to different users or locations (files, ...).

The Director Resource

The Director resource defines the attributes of the Directors running on the network. In the current implementation, there is only a single Director resource, but a future design may contain multiple Directors to maintain index and media database redundancy.

- Director

- Start of the Director resource. One and only one director resource must be supplied.

- Name = <name>

- The director name used by the system administrator. This directive is required.

- Description = <text>

- The text field contains a description of the Director that will be displayed in the graphical user interface. This directive is optional.

- Password = <UA-password>

- Specifies the password that must be supplied for the default Bacula Console to be authorized. The same password must appear in the Director resource of the Console configuration file. For added security, the password is never passed across the network but instead a challenge response hash code created from the password. This directive is required. If you have either /dev/random or bc on your machine, Bacula will generate a random password during the configuration process, otherwise it will be left blank and you must manually supply it.

The password is plain text. It is not generated through any special process but as noted above, it is better to use random text for security reasons.

- Messages = <Messages-resource-name>

- The messages resource specifies where to deliver Director messages that are not associated with a specific Job. Most messages are specific to a job and will be directed to the Messages resource specified by the job. However, there are a few messages that can occur when no job is running. This directive is required.

- Working Directory = <Directory>

- This directive is mandatory and specifies a directory in which the Director may put its status files. This directory should be used only by Bacula but may be shared by other Bacula daemons. However, please note, if this directory is shared with other Bacula daemons (the File daemon and Storage daemon), you must ensure that the Name given to each daemon is unique so that the temporary filenames used do not collide. By default the Bacula configure process creates unique daemon names by postfixing them with -dir, -fd, and -sd. Standard shell expansion of the Working Directory is done when the configuration file is read so that values such as $HOME will be properly expanded. This directive is required.

The working directory specified must already exist and be readable and writable by the Bacula daemon referencing it.

If you have specified a Director user and/or a Director group on your ./configure line with -with-dir-user and/or -with-dir-group the Working Directory owner and group will be set to those values.

- Pid Directory = <Directory>

- This directive is mandatory and specifies a directory in which the Director may put its process Id file. The process Id file is used to shutdown Bacula and to prevent multiple copies of Bacula from running simultaneously. Standard shell expansion of the Pid Directory is done when the configuration file is read so that values such as $HOME will be properly expanded.

The PID directory specified must already exist and be readable and writable by the Bacula daemon referencing it

Typically on Linux systems, you will set this to: /var/run. If you are not installing Bacula in the system directories, you can use the Working Directory as defined above. This directive is required.

- Scripts Directory = <Directory>

- This directive is optional and, if defined, specifies a directory in which the Director and the Storage daemon will look for many of the scripts that it needs to use during particular operations such as starting/stopping, the mtx-changer script, tape alerts, as well as catalog updates. This directory may be shared by other Bacula daemons. Standard shell expansion of the directory is done when the configuration file is read so that values such as $HOME will be properly expanded.

- QueryFile = <Path>

- This directive is mandatory and specifies a directory and file in which the Director can find the canned SQL statements for the query command of the Console. Standard shell expansion of the <Path> is done when the configuration file is read so that values such as $HOME will be properly expanded. This directive is required.

- Heartbeat Interval = <time-interval>

- This directive is optional and if specified will cause the Director to set a keepalive interval (heartbeat) in seconds on each of the sockets it opens for the Client resource. This value will override any specified at the Director level. It is implemented only on systems (Linux, ...) that provide the setsockopt TCP_KEEPIDLE function. The default value is 300s.

- Maximum Concurrent Jobs = <number>

- where <number> is the maximum number of total Director Jobs that should run concurrently. The default is set to 20, but you may set it to a larger number. Every valid connection to any daemon (Director, File daemon, or Storage daemon) results in a Job. This includes connections from Bacula Console. Thus the number of concurrent Jobs must, in general, be greater than the maximum number of Jobs that you wish to actually run.

In general, increasing the number of Concurrent Jobs increases the total throughtput of Bacula, because the simultaneous Jobs can all feed data to the Storage daemon and to the Catalog at the same time. However, keep in mind, that the Volume format becomes more complicated with multiple simultaneous jobs, consequently, restores may take longer if Bacula must sort through interleaved volume blocks from multiple simultaneous jobs. Though not normally necessary, this can be avoided by having each simultaneous job write to a different volume or by using data spooling, which will first spool the data to disk simultaneously, then write one spool file at a time to the volume thus avoiding excessive interleaving of the different job blocks.

- FD Connect Timeout = <time>

- where <time> is the time that the Director should continue attempting to contact the File daemon to start a job, and after which the Director will cancel the job. The default is 3 minutes.

- SD Connect Timeout = <time>

- where <time> is the time that the Director should continue attempting to contact the Storage daemon to start a job, and after which the Director will cancel the job. The default is 30 minutes.

- DirAddresses = <IP-address-specification>

- Specify the ports and addresses on which the Director daemon will listen for Bacula Console connections. Probably the simplest way to explain this is to show an example:

DirAddresses = { ip = { addr = 1.2.3.4; port = 1205;} ipv4 = { addr = 1.2.3.4; port = http; } ipv6 = { addr = 1.2.3.4; port = 1205; } ip = { addr = 1.2.3.4 port = 1205 } ip = { addr = 1.2.3.4 } ip = { addr = 201:220:222::2 } ip = { addr = bluedot.thun.net } }where ip, ip4, ip6, addr, and port are all keywords. Note, that the address can be specified as either a dotted quadruple, or IPv6 colon notation, or as a symbolic name (only in the ip specification). Also, port can be specified as a number or as the mnemonic value from the /etc/services file. If a port is not specified, the default will be used. If an ip section is specified, the resolution can be made either by IPv4 or IPv6. If ip4 is specified, then only IPv4 resolutions will be permitted, and likewise with ip6.

Please note that if you use the DirAddresses directive, you must not use either a DirPort or a DirAddress directive in the same resource.

- DirPort = <port-number>

- Specify the port (a positive integer) on which the Director daemon will listen for Bacula Console connections. This same port number must be specified in the Director resource of the Console configuration file. The default is 9101, so normally this directive need not be specified. This directive should not be used if you specify the DirAddresses (plural) directive.

- DirAddress = <IP-Address>

- This directive is optional, but if it is specified, it will cause the Director server (for the Console program) to bind to the specified <IP-Address>, which is either a domain name or an IP address specified as a dotted quadruple in string or quoted string format. If this directive is not specified, the Director will bind to any available address (the default). Note, unlike the DirAddresses specification noted above, this directive only permits a single address to be specified. This directive should not be used if you specify a DirAddresses (plural) directive.

- DirSourceAddress = <IP-Address>

- This record is optional, and if it is specified, it will cause the Director server (when initiating connections to a storage or file daemon) to source its connections from the specified address. Only a single IP address may be specified. If this record is not specified, the Director server will source its outgoing connections according to the system routing table (the default).

- Events Retention = <time>

-

The Events Retention directive defines the length of time that Bacula will keep events records in the Catalog database. When this time period expires, and if the user runs the prune events command, Bacula will prune (remove) Events records that are older than the specified period.

See the Configuration chapter of this manual for additional details of time specifications.

The default is 1 month.

- Statistics Retention = <time>

-

The Statistics Retention directive defines the length of time that Bacula will keep statistics job records in the Catalog database after the Job End time. (In JobHistory table) When this time period expires, and if the user runs the prune stats command, Bacula will prune (remove) Job records that are older than the specified period.

Theses statistics records aren't used for restore purpose, but mainly for capacity planning, billings, etc. See Statistics chapter for additional information.

See the Configuration chapter of this manual for additional details of time specifications.

- AutoPrune = <yes|no>

-

Normally, pruning of Files from the Catalog is specified on a Client by Client basis in the Client resource with the AutoPrune directive. It is also possible to overwrite the Client settings in the Pool resource used by jobs, with the AutoPrune, PruneFiles and PruneJobs directives.

If this directive is specified (not normally) and the value is no, it will override the value specified in all the Client and the Pool resources. The default is yes.

If you set AutoPrune = no, pruning will not be done automatically, and your Catalog will grow in size each time you run a Job. Pruning affects only information in the catalog and not data stored in the backup archives (on Volumes). The prune bconsole command can be used to prune catalog records respecting the Client and/or the Pool FileRetention, JobRetention and VolumeRetention directives.

- VerId = <string>

- where <string> is an identifier which can be used for support purpose. This string is displayed using the version command.

- MaximumConsoleConnections = <number>

- where <number> is the maximum number of Console Connections that could run concurrently. The default is set to 20, but you may set it to a larger number.

- MaximumReloadRequests = <number>

-

Where <number> is the maximum number of reload command that can be queued while jobs are running. The default is set to 32 and is usually sufficient.

- CommCompression = <yes|no>

-

If the two Bacula components (DIR, FD, SD, bconsole) have the comm line compression enabled, the line compression will be enabled. The default value is yes.

In many cases, the volume of data transmitted across the communications line can be reduced by a factor of three when this directive is enabled. In the case that the compression is not effective, Bacula turns it off on a record by record basis.

If you are backing up data that is already compressed the comm line compression will not be effective, and you are likely to end up with an average compression ratio that is very small. In this case, Bacula reports None in the Job report.

- TLS Enable = <yes|no>

-

Enable TLS support. If TLS is not enabled, none of the other TLS directives have any effect. In other words, even if you set TLS Require = yes you need to have TLS enabled or TLS will not be used.

- TLS PSK Enable = <yes|no>

-

Enable or Disable automatic TLS PSK support. TLS PSK is enabled by default between all Bacula components. The Pre-Shared Key used between the programs is the Bacula password. If both TLS Enable and TLS PSK Enable are enabled, the system will use TLS certificates.

- TLS Require = <yes|no>

-

Require TLS or TLS-PSK encryption. This directive is ignored unless one of TLS Enable or TLS PSK Enable is set to yes. If TLS is not required while TLS or TLS-PSK are enabled, then the Bacula component will connect with other components either with or without TLS or TLS-PSK

If TLS or TLS-PSK is enabled and TLS is required, then the Bacula component will refuse any connection request that does not use TLS.

- TLS Authenticate = <yes|no>

- When TLS Authenticate is enabled, after doing the CRAM-MD5 authentication, Bacula will also do TLS authentication, then TLS encryption will be turned off, and the rest of the communication between the two Bacula components will be done without encryption. If TLS-PSK is used instead of the regular TLS, the encryption is turned off after the TLS-PSK authentication step.

If you want to encrypt communications data, use the normal TLS directives but do not turn on TLS Authenticate.

- TLS Certificate = <Filename>

- The full path and filename of a PEM encoded TLS certificate. It will be used as either a client or server certificate, depending on the connection direction. PEM stands for Privacy Enhanced Mail, but in this context refers to how the certificates are encoded. This format is used because PEM files are base64 encoded and hence ASCII text based rather than binary. They may also contain encrypted information.

This directive is required in a server context, but it may not be specified in a client context if TLS Verify Peer is set to no in the corresponding server context.

Example:

File Daemon configuration file (bacula-fd.conf), Director resource configuration has TLS Verify Peer = no:

Director { Name = bacula-dir Password = "password" Address = director.example.com # TLS configuration directives TLS Enable = yes TLS Require = yes TLS Verify Peer = no TLS CA Certificate File = /opt/bacula/ssl/certs/root_cert.pem TLS Certificate = /opt/bacula/ssl/certs/client1_cert.pem TLS Key = /opt/bacula/ssl/keys/client1_key.pem }Having TLS Verify Peer = no, means the File Daemon, server context, will not check Directorâs public certificate, client context. There is no need to specify TLS Certificate File neither TLS Key directives in the Client resource, director configuration file. We can have the below client configuration in bacula-dir.conf:

Client { Name = client1-fd Address = client1.example.com FDPort = 9102 Catalog = MyCatalog Password = "password" ... # TLS configuration directives TLS Enable = yes TLS Require = yes TLS CA Certificate File = /opt/bacula/ssl/certs/ca_client1_cert.pem } - TLS Key = <Filename>

- The full path and filename of a PEM encoded TLS private key. It must correspond to the TLS certificate.

- TLS Verify Peer = <yes|no>

- Verify peer certificate. Instructs server to request and verify the client's X.509 certificate. Any client certificate signed by a known-CA will be accepted. Additionally, the client's X509 certificate Common Name must meet the value of the Address directive. If the TLSAllowed CN onfiguration directive is used, the client's x509 certificate Common Name must also correspond to one of the CN specified in the TLS Allowed CN directive. This directive is valid only for a server and not in client context. The default is yes.

- TLS Allowed CN = <string list>

- Common name attribute of allowed peer certificates. This directive is valid for a server and in a client context. If this directive is specified, the peer certificate will be verified against this list. In the case this directive is configured on a server side, the allowed CN list will not be checked if TLS Verify Peer is set to no (TLS Verify Peer is yes by default). This can be used to ensure that only the CN-approved component may connect. This directive may be specified more than once.

In the case this directive is configured in a server side, the allowed CN list will only be checked if TLS Verify Peer = yes (default). For example, in bacula-fd.conf, Director resource definition:

Director { Name = bacula-dir Password = "password" Address = director.example.com # TLS configuration directives TLS Enable = yes TLS Require = yes # if TLS Verify Peer = no, then TLS Allowed CN will not be checked. TLS Verify Peer = yes TLS Allowed CN = director.example.com TLS CA Certificate File = /opt/bacula/ssl/certs/root_cert.pem TLS Certificate = /opt/bacula/ssl/certs/client1_cert.pem TLS Key = /opt/bacula/ssl/keys/client1_key.pem }In the case this directive is configured in a client side, the allowed CN list will always be checked.

Client { Name = client1-fd Address = client1.example.com FDPort = 9102 Catalog = MyCatalog Password = "password" ... # TLS configuration directives TLS Enable = yes TLS Require = yes # the Allowed CN will be checked for this client by director # the client's certificate Common Name must match any of # the values of the Allowed CN list TLS Allowed CN = client1.example.com TLS CA Certificate File = /opt/bacula/ssl/certs/ca_client1_cert.pem TLS Certificate = /opt/bacula/ssl/certs/director_cert.pem TLS Key = /opt/bacula/ssl/keys/director_key.pem }If the client doesnât provide a certificate with a Common Name that meets any value in the TLS Allowed CN list, an error message will be issued:

16-Nov 17:30 bacula-dir JobId 0: Fatal error: bnet.c:273 TLS certificate verification failed. Peer certificate did not match a required commonName 16-Nov 17:30 bacula-dir JobId 0: Fatal error: TLS negotiation failed with FD at "192.168.100.2:9102".

- TLS CA Certificate File = <Filename>

- The full path and filename specifying a PEM encoded TLS CA certificate(s). Multiple certificates are permitted in the file. One of TLS CA Certificate File or TLS CA Certificate Dir are required in a server context, unless TLS Verify Peer (see above) is set to no, and are always required in a client context.

- TLS CA Certificate Dir = <Directory>

- Full path to TLS CA certificate directory. In the current implementation, certificates must be stored PEM encoded with OpenSSL-compatible hashes, which is the subject name's hash and an extension of .0. One of TLS CA Certificate File or TLS CA Certificate Dir are required in a server context, unless TLS Verify Peer is set to no, and are always required in a client context.

- TLS DH File = <Directory>

- Path to PEM encoded Diffie-Hellman parameter file. If this directive is specified, DH key exchange will be used for the ephemeral keying, allowing for forward secrecy of communications. DH key exchange adds an additional level of security because the key used for encryption/decryption by the server and the client is computed on each end and thus is never passed over the network if Diffie-Hellman key exchange is used. Even if DH key exchange is not used, the encryption/decryption key is always passed encrypted. This directive is only valid within a server context.

To generate the parameter file, you may use openssl:

openssl dhparam -out dh4096.pem -5 4096

The following is an example of a valid Director resource definition:

Director {

Name = HeadMan

WorkingDirectory = "$HOME/bacula/bin/working"

Password = UA_password

PidDirectory = "$HOME/bacula/bin/working"

QueryFile = "$HOME/bacula/bin/query.sql"

Messages = Standard

}

The Job Resource

The Job resource defines a Job (Backup, Restore, ...) that Bacula must perform. Each Job resource definition contains the name of a Client and a FileSet to backup, the Schedule for the Job, where the data are to be stored, and what media Pool can be used. In effect, each Job resource must specify What, Where, How, and When or FileSet, Storage, Backup/Restore/Level, and Schedule respectively. Note, the FileSet must be specified for a restore job for historical reasons, but it is no longer used.

Only a single type (Backup, Restore, ...) can be specified for any job. If you want to backup multiple FileSets on the same Client or multiple Clients, you must define a Job for each one.

Note, you define only a single Job to do the Full, Differential, and Incremental backups since the different backup levels are tied together by a unique Job name. Normally, you will have only one Job per Client, but if a client has a really huge number of files (more than several million), you might want to split it into to Jobs each with a different FileSet covering only part of the total files.

Multiple Storage daemons are not currently supported for Jobs, so if you do want to use multiple storage daemons, you will need to create a different Job and ensure that for each Job that the combination of Client and FileSet are unique. The Client and FileSet are what Bacula uses to restore a client, so if there are multiple Jobs with the same Client and FileSet or multiple Storage daemons that are used, the restore will not work. This problem can be resolved by defining multiple FileSet definitions (the names must be different, but the contents of the FileSets may be the same).

- Job

- Start of the Job resource. At least one Job resource is required.

- Name = <name>

- The Job name. This name can be specified on the run command in the console program to start a job. If the name contains spaces, it must be specified between quotes. It is generally a good idea to give your job the same name as the Client that it will backup. This permits easy identification of jobs.

When the job actually runs, the unique Job Name will consist of the name you specify here followed by the date and time the job was scheduled for execution. This directive is required.

- Enabled = <yes|no>

- This directive allows you to enable or disable a Job resource. When the resource of the Job is disabled, the Job will no longer be scheduled and it will not be available in the list of Jobs to be run. To be able to use the Job you must enable it.

- Tag = <string, string2, string3>

- The Tag directive specifies a list of tags to create when creating a new Job record. This directive is optional.

- Type = <job-type>

- The Type directive specifies the Job type, which may be one of the following: Backup, Restore, Verify, or Admin. This directive is required. Within a particular Job Type, there are also Levels as discussed in the next item.

- Backup

- Run a backup Job. Normally you will have at least one Backup job for each client you want to save. Normally, unless you turn off cataloging, most all the important statistics and data concerning files backed up will be placed in the catalog.

- Restore

- Run a restore Job. Normally, you will specify only one Restore job which acts as a sort of prototype that you will modify using the console program in order to perform restores. Although certain basic information from a Restore job is saved in the catalog, it is very minimal compared to the information stored for a Backup job - for example, no File database entries are generated since no Files are saved.

Restore jobs cannot be automatically started by the scheduler as is the case for Backup, Verify and Admin jobs. To restore files, you must use the restore command in the console.

- Verify

- Run a Verify Job. In general, Verify jobs permit you to compare the contents of the catalog to the file system, or to what was backed up. In addition, to verifying that a tape that was written can be read, you can also use Verify as a sort of tripwire intrusion detection.

- Admin

Run an Admin Job. Only Director's runscripts will be executed. The Client is not involved in an Admin job, so features such as Client Run Before Job are not available. Although an Admin job is recorded in the catalog, very little data is saved. An Admin job can be used to periodically run catalog pruning, if you do not want to do it at the end of each Backup Job.

- Migration

- Run a Migration Job (similar to a backup job) that reads data that was previously backed up to a Volume and writes it to another Volume. (See (here))

- Copy

- Run a Copy Job that essentially creates two identical copies of the same backup. The Copy process is essentially identical to the Migration feature with the exception that the Job that is copied is left unchanged. (See (here))

- Level = <job-level>

- The Level directive specifies the default Job level to be run. Each different Job Type (Backup, Restore, ...) has a different set of Levels that can be specified. The Level is normally overridden by a different value that is specified in the Schedule resource. This directive is not required, but must be specified either by a Level directive or as an override specified in the Schedule resource.

For a Backup Job, the Level may be one of the following:

- Full

- When the Level is set to Full all files in the FileSet whether or not they have changed will be backed up.

- Incremental

- When the Level is set to Incremental all files specified in the FileSet that have changed since the last successful backup of the the same Job using the same FileSet and Client, will be backed up. If the Director cannot find a previous valid Full backup then the job will be upgraded into a Full backup. When the Director looks for a valid backup record in the catalog database, it looks for a previous Job with:

- The same Job name.

- The same Client name.

- The same FileSet (any change to the definition of the FileSet such as adding or deleting a file in the Include or Exclude sections constitutes a different FileSet.

- The Job was a Full, Differential, or Incremental backup.

- The Job terminated normally (i.e. did not fail or was not canceled).

- The Job started no longer ago than Max Full Interval.

If all the above conditions do not hold, the Director will upgrade the Incremental to a Full save. Otherwise, the Incremental backup will be performed as requested.

The File daemon (Client) decides which files to backup for an Incremental backup by comparing start time of the prior Job (Full, Differential, or Incremental) against the time each file was last “modified” (st_mtime) and the time its attributes were last “changed”(st_ctime). If the file was modified or its attributes changed on or after this start time, it will then be backed up.

Some virus scanning software may change st_ctime while doing the scan. For example, if the virus scanning program attempts to reset the access time (st_atime), which Bacula does not use, it will cause st_ctime to change and hence Bacula will backup the file during an Incremental or Differential backup. In the case of Sophos virus scanning, you can prevent it from resetting the access time (st_atime) and hence changing st_ctime by using the

--no-reset-atime option. For other software, please see their manual.When Bacula does an Incremental backup, all modified files that are still on the system are backed up. However, any file that has been deleted since the last Full backup remains in the Bacula catalog, which means that if between a Full save and the time you do a restore, some files are deleted, those deleted files will also be restored. The deleted files will no longer appear in the catalog after doing another Full save.

In addition, if you move a directory rather than copy it, the files in it do not have their modification time (st_mtime) or their attribute change time (st_ctime) changed. As a consequence, those files will probably not be backed up by an Incremental or Differential backup which depend solely on these time stamps. If you move a directory, and wish it to be properly backed up, it is generally preferable to copy it, then delete the original.

However, to manage deleted files or directories changes in the catalog during an Incremental backup you can use accurate mode. This is quite memory consuming process. See Accurate mode for more details.

- Differential

- When the Level is set to Differential all files specified in the FileSet that have changed since the last successful Full backup of the same Job will be backed up. If the Director cannot find a valid previous Full backup for the same Job, FileSet, and Client, backup, then the Differential job will be upgraded into a Full backup. When the Director looks for a valid Full backup record in the catalog database, it looks for a previous Job with:

- The same Job name.

- The same Client name.

- The same FileSet (any change to the definition of the FileSet such as adding or deleting a file in the Include or Exclude sections constitutes a different FileSet.

- The Job was a FULL backup.

- The Job terminated normally (i.e. did not fail or was not canceled).

- The Job started no longer ago than Max Full Interval.

If all the above conditions do not hold, the Director will upgrade the Differential to a Full save. Otherwise, the Differential backup will be performed as requested.

The File daemon (Client) decides which files to backup for a differential backup by comparing the start time of the prior Full backup Job against the time each file was last “modified” (st_mtime) and the time its attributes were last “changed” (st_ctime). If the file was modified or its attributes were changed on or after this start time, it will then be backed up. The start time used is displayed after the Since on the Job report. In rare cases, using the start time of the prior backup may cause some files to be backed up twice, but it ensures that no change is missed. As with the Incremental option, you should ensure that the clocks on your server and client are synchronized or as close as possible to avoid the possibility of a file being skipped. Note, on versions 1.33 or greater Bacula automatically makes the necessary adjustments to the time between the server and the client so that the times Bacula uses are synchronized.

When Bacula does a Differential backup, all modified files that are still on the system are backed up. However, any file that has been deleted since the last Full backup remains in the Bacula catalog, which means that if between a Full save and the time you do a restore, some files are deleted, those deleted files will also be restored. The deleted files will no longer appear in the catalog after doing another Full save. However, to remove deleted files from the catalog during a Differential backup is quite a time consuming process and not currently implemented in Bacula. It is, however, a planned future feature.

As noted above, if you move a directory rather than copy it, the files in it do not have their modification time (st_mtime) or their attribute change time (st_ctime) changed. As a consequence, those files will probably not be backed up by an Incremental or Differential backup which depend solely on these time stamps. If you move a directory, and wish it to be properly backed up, it is generally preferable to copy it, then delete the original. Alternatively, you can move the directory, then use the touch program to update the timestamps.

However, to manage deleted files or directories changes in the catalog during an Differential backup you can use accurate mode. This is quite memory consuming process. See Accurate mode for more details.

Every once and a while, someone asks why we need Differential backups as long as Incremental backups pickup all changed files. There are possibly many answers to this question, but the one that is the most important for me is that a Differential backup effectively merges all the Incremental and Differential backups since the last Full backup into a single Differential backup. This has two effects:

- It gives some redundancy since the old backups could be used if the merged backup cannot be read.

- More importantly, it reduces the number of Volumes that are needed to do a restore effectively eliminating the need to read all the volumes on which the preceding Incremental and Differential backups since the last Full are done.

- VirtualFull

- When the backup Level is set to VirtualFull, Bacula will consolidate the previous Full backup plus the most recent Differential backup and any subsequent Incremental backups into a new Full backup. This new Full backup will then be considered as the most recent Full for any future Incremental or Differential backups. The VirtualFull backup is accomplished without contacting the client by reading the previous backup data and writing it to a volume in a different pool.

Bacula's virtual backup feature is often called Synthetic Backup or Consolidation in other backup products.

For a Restore Job, no level needs to be specified.

For a Verify Job, the Level may be one of the following:

- InitCatalog

- does a scan of the specified FileSet and stores the file attributes in the Catalog database. Since no file data is saved, you might ask why you would want to do this. It turns out to be a very simple and easy way to have a Tripwire like feature using Bacula. In other words, it allows you to save the state of a set of files defined by the FileSet and later check to see if those files have been modified or deleted and if any new files have been added. This can be used to detect system intrusion. Typically you would specify a FileSet that contains the set of system files that should not change (e.g. /sbin, /boot, /lib, /bin, ...). Normally, you run the InitCatalog level verify one time when your system is first setup, and then once again after each modification (upgrade) to your system. Thereafter, when your want to check the state of your system files, you use a Verify level = Catalog. This compares the results of your InitCatalog with the current state of the files.

- Catalog

- Compares the current state of the files against the state previously saved during an InitCatalog. Any discrepancies are reported. The items reported are determined by the Verify options specified on the Include directive in the specified FileSet (see the FileSet resource below for more details). Typically this command will be run once a day (or night) to check for any changes to your system files.

Please note! If you run two Verify Catalog jobs on the same client at the same time, the results will certainly be incorrect. This is because Verify Catalog modifies the Catalog database while running in order to track new files.

- Data

Read back the data stored on volumes and check data attributes such as size and the checksum of all the files.

To run the Verify job, it is possible to use the “jobid” parameter of the “run” command.

Please note, the current Verify Data implementation requires specifying the correct Storage resource in the Verify job. The Storage resource can be changed with the bconsole command line and with the menu.

It is also possible to use the accurate option to check catalog records at the same time. When using a Verify job with level=Data and accurate=yes can replace the level=VolumeToCatalog option.

To run a Verify Job with the accurate option, it is possible to set the option in the Job definition or set use the accurate=yes on the command line.

* run job=VerifyData level=Data jobid=10 accurate=yes

- VolumeToCatalog

- This level causes Bacula to read the file attribute data written to the Volume from the last backup Job for the job specified on the VerifyJob directive. The file attribute data are compared to the values saved in the Catalogind database and any differences are reported. This is similar to the DiskToCatalog level except that instead of comparing the disk file attributes to the catalog database, the attribute data written to the Volume is read and compared to the catalog database. Although the attribute data including the signatures (MD5 or SHA1) are compared, the actual file data is not compared (it is not in the catalog).

Please note! If you run two Verify VolumeToCatalog jobs on the same client at the same time, the results will certainly be incorrect. This is because the Verify VolumeToCatalog modifies the Catalog database while running.

- DiskToCatalog

- This level causes Bacula to read the files as they currently are on disk, and to compare the current file attributes with the attributes saved in the catalog from the last backup for the job specified on the VerifyJob directive. This level differs from the VolumeToCatalog level described above by the fact that it doesn't compare against a previous Verify job but against a previous backup. When you run this level, you must supply the verify options on your Include statements. Those options determine what attribute fields are compared.

This command can be very useful if you have disk problems because it will compare the current state of your disk against the last successful backup, which may be several jobs.

Note, the current implementation (1.32c) does not identify files that have been deleted.

- Accurate = <yes|no>

- In accurate mode, the File daemon knowns exactly which files were present after the last backup. So it is able to handle deleted or renamed files.

When restoring a FileSet for a specified date (including “most recent”), Bacula is able to restore exactly the files and directories that existed at the time of the last backup prior to that date including ensuring that deleted files are actually deleted, and renamed directories are restored properly.

In this mode, the File daemon must keep data concerning all files in memory. So If you do not have sufficient memory, the backup may either be terribly slow or fail.

For 500.000 files (a typical desktop linux system), it will require approximately 64 Megabytes of RAM on your File daemon to hold the required information.

- Verify Job = <Job-Resource-Name>

- If you run a verify job without this directive, the last job run will be compared with the catalog, which means that you must immediately follow a backup by a verify command. If you specify a Verify Job Bacula will find the last job with that name that ran. This permits you to run all your backups, then run Verify jobs on those that you wish to be verified (most often a VolumeToCatalog) so that the tape just written is re-read.

- JobDefs = <JobDefs-Resource-Name>

- If a <JobDefs-Resource-Name> is specified, all the values contained in the named JobDefs resource will be used as the defaults for the current Job. Any value that you explicitly define in the current Job resource, will override any defaults specified in the JobDefs resource. The use of this directive permits writing much more compact Job resources where the bulk of the directives are defined in one or more JobDefs. This is particularly useful if you have many similar Jobs but with minor variations such as different Clients. A simple example of the use of JobDefs is provided in the default bacula-dir.conf file.

- Bootstrap = <bootstrap-file>

- The Bootstrap directive specifies a bootstrap file that, if provided, will be used during Restore Jobs and is ignored in other Job types. The <bootstrap-file> contains the list of tapes to be used in a Restore Job as well as which files are to be restored. Specification of this directive is optional, and if specified, it is used only for a restore job. In addition, when running a Restore job from the console, this value can be changed.

If you use the restore command in the bconsole program, to start a Restore job, the <bootstrap-file> will be created automatically from the files you select to be restored.

For additional details of the bootstrap directive, please see Restoring Files with the Bootstrap File chapter of this manual.

- Write Bootstrap = <bootstrap-file-specification>

- The writebootstrap directive specifies a file name where Bacula will write a bootstrap file for each Backup job run. This directive applies only to Backup Jobs. If the Backup job is a Full save, Bacula will erase any current contents of the specified file before writing the bootstrap records. If the Job is an Incremental or Differential save, Bacula will append the current bootstrap record to the end of the file.

Using this feature, permits you to constantly have a bootstrap file that can recover the current state of your system. Normally, the file specified should be a mounted drive on another machine, so that if your hard disk is lost, you will immediately have a bootstrap record available. Alternatively, you should copy the bootstrap file to another machine after it is updated. Note, it is a good idea to write a separate bootstrap file for each Job backed up including the job that backs up your catalog database.

If the <bootstrap-file-specification> begins with a vertical bar (|), Bacula will use the specification as the name of a program to which it will pipe the bootstrap record. It could for example be a shell script that emails you the bootstrap record.

On versions 1.39.22 or greater, before opening the file or executing the specified command, Bacula performs character substitution like in RunScript directive. To automatically manage your bootstrap files, you can use this in your JobDefs resources:

JobDefs { Write Bootstrap = "%c_%n.bsr" ... }For more details on using this file, please see the chapter entitled The Bootstrap File of this manual.

- Client = <client-resource-name>

- The Client directive specifies the Client (File daemon) that will be used in the current Job. Only a single Client may be specified in any one Job. The Client runs on the machine to be backed up, and sends the requested files to the Storage daemon for backup, or receives them when restoring. For additional details, see the Client Resource section of this chapter. This directive is required.

- FileSet = <FileSet-resource-name>

- The FileSet directive specifies the FileSet that will be used in the current Job. The FileSet specifies which directories (or files) are to be backed up, and what options to use (e.g. compression, ...). Only a single FileSet resource may be specified in any one Job. For additional details, see the FileSet Resource section of this chapter. This directive is required.

- Base = <job-resource-name, ...>

- The Base directive permits to specify the list of jobs that will be used during Full backup as base. This directive is optional. See the Base Job chapter for more information.

- Messages = <messages-resource-name>

- The Messages directive defines what Messages resource should be used for this job, and thus how and where the various messages are to be delivered. For example, you can direct some messages to a log file, and others can be sent by email. For additional details, see the Messages Resource Chapter of this manual. This directive is required.

- Snapshot Retention = <time-period-specification>

-

The Snapshot Retention directive defines the length of time that Bacula will keep Snapshots in the Catalog database and on the Client after the Snapshot creation. When this time period expires, and if using the snapshot prune command, Bacula will prune (remove) Snapshot records that are older than the specified Snapshot Retention period and will contact the FileDaemon to delete Snapshots from the system.

The Snapshot retention period is specified as seconds, minutes, hours, days, weeks, months, quarters, or years. See the Configuration chapter of this manual for additional details of time specification.

The default is 0 seconds, Snapshots are deleted at the end of the backup. The Job SnapshotRetention directive overwrites the Client SnapshotRetention directive.

- Pool = <pool-resource-name>

- The Pool directive defines the pool of Volumes where your data can be backed up. Many Bacula installations will use only the Default pool. However, if you want to specify a different set of Volumes for different Clients or different Jobs, you will probably want to use Pools. For additional details, see the Pool Resource section of this chapter. This directive is required.

- Full Backup Pool = <pool-resource-name>

- The Full Backup Pool specifies a Pool to be used for Full backups. It will override any Pool specification during a Full backup. This directive is optional.

- Differential Backup Pool = <pool-resource-name>

- The Differential Backup Pool specifies a Pool to be used for Differential backups. It will override any Pool specification during a Differential backup. This directive is optional.

- Incremental Backup Pool = <pool-resource-name>

- The Incremental Backup Pool specifies a Pool to be used for Incremental backups. It will override any Pool specification during an Incremental backup. This directive is optional.

- VirtualFull Backup Pool = <pool-resource-name>

- The VirtualFull Backup Pool specifies a Pool to be used for VirtualFull backups. It will override any Pool specification during an VirtualFull backup. This directive is optional.

- BackupsToKeep = <number>

-

When this directive is present during a Virtual Full (it is ignored for other Job types), it will look for a Full backup that has more subsequent backups than the value specified. In the example below, the Job will simply terminate unless there is a Full back followed by at least 31 backups of either level Differential or Incremental.

Job { Name = "VFull" Type = Backup Level = VirtualFull Client = "my-fd" File Set = "FullSet" Accurate = Yes Backups To Keep = 30 }Assuming that the last Full backup is followed by 32 Incremental backups, a Virtual Full will be run that consolidates the Full with the first two Incrementals that were run after the Full. The result is that you will end up with a Full followed by 30 Incremental backups.

- DeleteConsolidatedJobs = <yes/no>

-

If set to yes, it will cause any old Job that is consolidated during a Virtual Full to be deleted. In the example above we saw that a Full plus one other job (either an Incremental or Differential) were consolidated into a new Full backup. The original Full plus the other Job consolidated will be deleted. The default value is no.

- Schedule = <schedule-name>

- The Schedule directive defines what schedule is to be used for the Job. The schedule in turn determines when the Job will be automatically started and what Job level (i.e. Full, Incremental, ...) is to be run. This directive is optional, and if left out, the Job can only be started manually using the Console program. Although you may specify only a single Schedule resource for any one job, the Schedule resource may contain multiple Run directives, which allow you to run the Job at many different times, and each Run directive permits overriding the default Job Level Pool, Storage, and Messages resources. This gives considerable flexibility in what can be done with a single Job. For additional details, see the Schedule Resource chapter of this manual.

- Storage = <storage-resource-name>

- The Storage directive defines the name of the storage services where you want to backup the FileSet data. For additional details, see the Storage Resource Chapter of this manual. The Storage resource may also be specified in the Job's Pool resource, in which case the value in the Pool resource overrides any value in the Job. This Storage resource definition is not required by either the Job resource or in the Pool, but it must be specified in one or the other, if not an error will result. Storage can be either specified by an single item or it can be specified as a comma separated list of storages to use according to StorageGroupPolicy. If some number of first storage daemons on the list are unavailable due to network problems, broken or unreachable for some other reason, Bacula will take first available one from the list (which is sorted according to the policy used) which is network reachable and healthy.

- StorageGroupPolicy = <Storage Group Policy Name>

- Storage Group Policy determines how Storage resources (from the 'Storage' directive) are being choosen from the Storage list. If no StoragePolicy is specified Bacula always tries to use first available Storage from the provided list. Currently supported policies are:

- ListedOrder

- - This is the default policy, which uses first available storage from the list provided

- LeastUsed

- - This policy scans all storage daemons from the list and chooses the one with the least number of jobs being currently run

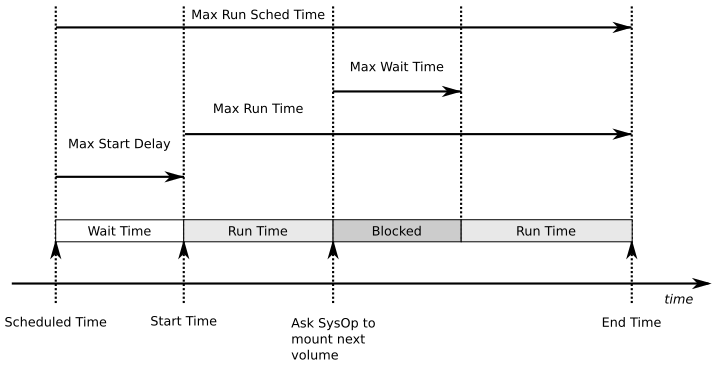

- Max Start Delay = <time>

- The time specifies the maximum delay between the scheduled time and the actual start time for the Job. For example, a job can be scheduled to run at 1:00am, but because other jobs are running, it may wait to run. If the delay is set to 3600 (one hour) and the job has not begun to run by 2:00am, the job will be canceled. This can be useful, for example, to prevent jobs from running during day time hours. The default is 0 which indicates no limit.

- Max Run Time = <time>

- The time specifies the maximum allowed time that a job may run, counted from when the job starts, (not necessarily the same as when the job was scheduled).

By default, the the watchdog thread will kill any Job that has run more than 200 days. The maximum watchdog timeout is independent of MaxRunTime and cannot be changed.

- Incremental|Differential Max Wait Time = <time>

- Theses directives have been deprecated in favor of Incremental|Differential Max Run Time since bacula 2.3.18.

- Incremental Max Run Time = <time>

- The time specifies the maximum allowed time that an Incremental backup job may run, counted from when the job starts, (not necessarily the same as when the job was scheduled).

- Differential Max Run Time = <time>

- The time specifies the maximum allowed time that a Differential backup job may run, counted from when the job starts, (not necessarily the same as when the job was scheduled).

- Max Run Sched Time = <time>

-

The time specifies the maximum allowed time that a job may run, counted from when the job was scheduled. This can be useful to prevent jobs from running during working hours. We can see it like Max Start Delay + Max Run Time.

- Max Wait Time = <time>

- The time specifies the maximum allowed time that a job may block waiting for a resource (such as waiting for a tape to be mounted, or waiting for the storage or file daemons to perform their duties), counted from the when the job starts, (not necessarily the same as when the job was scheduled). This directive works as expected since bacula 2.3.18.

- Maximum Spawned Jobs = <nb>

-

The Job resource now permits specifying a number of Maximum Spawn Jobs. The default is 600. This directive can be useful if you have big hardware and you do a lot of Migration/Copy jobs which start at the same time.

- Maximum Bandwidth = <speed>

-

The speed parameter specifies the maximum allowed bandwidth in bytes that a job may use. You may specify the following speed parameter modifiers: kb/s (1,000 bytes per second), k/s (1,024 bytes per second), mb/s (1,000,000 bytes per second), or m/s (1,048,576 bytes per second).

The use of TLS, TLS PSK, CommLine compression and Deduplication can interfer with the value set with the Directive.

- Max Full Interval = <time>

- The time specifies the maximum allowed age (counting from start time) of the most recent successful Full backup that is required in order to run Incremental or Differential backup jobs. If the most recent Full backup is older than this interval, Incremental and Differential backups will be upgraded to Full backups automatically. If this directive is not present, or specified as 0, then the age of the previous Full backup is not considered.

- Max VirtualFull Interval = <time>

-

The time specifies the maximum allowed age (counting from start time) of the most recent successful Full backup that is required in order to run Incremental, Differential or Full backup jobs. If the most recent Full backup is older than this interval, Incremental, Differential and Full backups will be converted to a VirtualFull backup automatically. If this directive is not present, or specified as 0, then the age of the previous Full backup is not considered.

Please note that a VirtualFull job is not a real backup job. A VirtualFull will merge exiting jobs to create a new virtual Full job in the catalog and will copy the exiting data to new volumes.

The Client is not used in a VirtualFull job, so when using this directive, the Job that was supposed to run and save recently modified data on the Client will not run. Only the next regular Job defined in the Schedule will backup the data. It will not be possible to restore the data that was modified on the Client between the last Incremental/Differential and the VirtualFull.

- Prefer Mounted Volumes = <yes|no>

- If the Prefer Mounted Volumes directive is set to yes (default yes), the Storage daemon is requested to select either an Autochanger or a drive with a valid Volume already mounted in preference to a drive that is not ready. This means that all jobs will attempt to append to the same Volume (providing the Volume is appropriate - right Pool, ... for that job), unless you are using multiple pools. If no drive with a suitable Volume is available, it will select the first available drive. Note, any Volume that has been requested to be mounted, will be considered valid as a mounted volume by another job. This if multiple jobs start at the same time and they all prefer mounted volumes, the first job will request the mount, and the other jobs will use the same volume.

If the directive is set to no, the Storage daemon will prefer finding an unused drive, otherwise, each job started will append to the same Volume (assuming the Pool is the same for all jobs). Setting Prefer Mounted Volumes to no can be useful for those sites with multiple drive autochangers that prefer to maximize backup throughput at the expense of using additional drives and Volumes. This means that the job will prefer to use an unused drive rather than use a drive that is already in use.

Despite the above, we recommend against setting this directive to no since it tends to add a lot of swapping of Volumes between the different drives and can easily lead to deadlock situations in the Storage daemon. We will accept bug reports against it, but we cannot guarantee that we will be able to fix the problem in a reasonable time.

A better alternative for using multiple drives is to use multiple pools so that Bacula will be forced to mount Volumes from those Pools on different drives.

- Prune Jobs = <yes|no>

- Normally, pruning of Jobs from the Catalog is specified on a Client by Client basis in the Client resource with the AutoPrune directive. If this directive is specified (not normally) and the value is yes, it will override the value specified in the Client resource. The default is no.

- Prune Files = <yes|no>

- Normally, pruning of Files from the Catalog is specified on a Client by Client basis in the Client resource with the AutoPrune directive. If this directive is specified (not normally) and the value is yes, it will override the value specified in the Client resource. The default is no.

- Prune Volumes = <yes|no>

- Normally, pruning of Volumes from the Catalog is specified on a Pool by Pool basis in the Pool resource with the AutoPrune directive. Note, this is different from File and Job pruning which is done on a Client by Client basis. If this directive is specified (not normally) and the value is yes, it will override the value specified in the Pool resource. The default is no.

- RunScript {<body-of-runscript>}

-

The RunScript directive behaves like a resource in that it requires opening and closing braces around a number of directives that make up the body of the runscript.

The specified Command (see below for details) is run as an external program prior or after the current Job. This is optional. By default, the program is executed on the Client side like in ClientRunXXXJob.

Console options are special commands that are sent to the director instead of the OS. At this time, console command ouputs are redirected to log with the jobid 0.

You can use following console command : delete, disable, enable, estimate, list, llist, memory, prune, purge, reload, status, setdebug, show, time, trace, update, version, .client, .jobs, .pool, .storage. See console chapter for more information. You need to specify needed information on command line, nothing will be prompted. Example :

Console = "prune files client=%c" Console = "update stats age=3"

You can specify more than one Command/Console option per RunScript.

You can use following options may be specified in the body of the runscript:

Options for Run Script Options Value Default Information Runs On Success Yes / No Yes Run command if JobStatus is successful Runs On Failure Yes / No No Run command if JobStatus isn't successful Runs On Client Yes / No Yes Run command on clientnoteScripts will run on Client only with Jobs that use a Client. (Backup, Restore, some Verify jobs). For other Jobs (Copy, Migration, Admin, ...) RunsOnClient should be set to No. Runs When Before After Always AfterVSS Never When run commands Fail Job On Error Yes/No Yes Fail job if script returns something different from 0 Command Path to your script Console Console command Any output sent by the command to standard output will be included in the Bacula job report. The command string must be a valid program name or name of a shell script.

In addition, the command string is parsed then fed to the OS, which means that the path will be searched to execute your specified command, but there is no shell interpretation, as a consequence, if you invoke complicated commands or want any shell features such as redirection or piping, you must call a shell script and do it inside that script.

Before submitting the specified command to the operating system, Bacula performs character substitution of the following characters:

%% = % %b = Job Bytes %c = Client's name %C = If the job is a Cloned job (Only on director side) %d = Daemon's name (Such as host-dir or host-fd) %D = Director's name (Also valid on file daemon) %e = Job Exit Status %E = Non-fatal Job Errors %f = Job FileSet (Only on director side) %F = Job Files %h = Client address %i = JobId %I = Migration/Copy JobId (Only in Copy/Migrate Jobs) %j = Unique Job id %l = Job Level %n = Job name %o = Job Priority %p = Pool name (Only on director side) %P = Current PID process %R = Read Bytes %s = Since time %S = Previous Job name (Only on file daemon side) %t = Job type (Backup, ...) %v = Volume name (Only on director side) %w = Storage name (Only on director side) %x = Spooling enabled? ("yes" or "no")Some character substitutions are not available in all situations. The Job Exit Status code %e edits the following values:

- OK

- Error

- Fatal Error

- Canceled

- Differences

- Unknown term code

Thus if you edit it on a command line, you will need to enclose it within some sort of quotes.

You can use these following shortcuts:

Examples:RunScript shortcuts Runs Runs FailJob Runs Runs Keyword On On On On When Success Failure Error Client Run Before Job Yes No Before Run After Job Yes No No After Run After Failed Job No Yes No After Client Run Before Job Yes Yes Before Client Run After Job Yes No Yes After RunScript { RunsWhen = Before FailJobOnError = No Command = "/etc/init.d/apache stop" } RunScript { RunsWhen = After RunsOnFailure = yes Command = "/etc/init.d/apache start" }Notes about ClientRunBeforeJob

For compatibility reasons, with this shortcut, the command is executed directly when the client recieve it. And if the command is in error, other remote runscripts will be discarded. To be sure that all commands will be sent and executed, you have to use RunScript syntax.

Special Shell Considerations

A “Command =” can be one of:

- The full path to an executable program

- The name of an executable program that can be found in the $PATH

- A complex shell command in the form of: "sh -c \"your commands go here\""

You can run scripts just after snapshots initializations with AfterVSS keyword.

In addition, for a Windows client, please take note that you must ensure a correct path to your script. The script or program can be a .com, .exe or a .bat file. If you just put the program name in then Bacula will search using the same rules that cmd.exe uses (current directory, Bacula bin directory, and PATH). It will even try the different extensions in the same order as cmd.exe. The command can be anything that cmd.exe or command.com will recognize as an executable file.

However, if you have slashes in the program name then Bacula figures you are fully specifying the name, so you must also explicitly add the three character extension.

The command is run in a Win32 environment, so Unix like commands will not work unless you have installed and properly configured Cygwin in addition to and separately from Bacula.

The System %Path% will be searched for the command. (under the environment variable dialog you have have both System Environment and User Environment, we believe that only the System environment will be available to bacula-fd, if it is running as a service.)

System environment variables can be referenced with %var% and used as either part of the command name or arguments.

So if you have a script in the Bacula \bin directory then the following lines should work fine:

Client Run Before Job = systemstate or Client Run Before Job = systemstate.bat or Client Run Before Job = "systemstate" or Client Run Before Job = "systemstate.bat" or ClientRunBeforeJob = "\"C:/Program Files/Bacula/systemstate.bat\""The outer set of quotes is removed when the configuration file is parsed. You need to escape the inner quotes so that they are there when the code that parses the command line for execution runs so it can tell what the program name is.

ClientRunBeforeJob = "\"C:/Program Files/Software Vendor/Executable\" /arg1 /arg2 \"foo bar\""The special characters

&<>()@^|

will need to be quoted, if they are part of a filename or argument.If someone is logged in, a blank “command” window running the commands will be present during the execution of the command.

Some Suggestions from Phil Stracchino for running on Win32 machines with the native Win32 File daemon:

- You might want the ClientRunBeforeJob directive to specify a .bat file which runs the actual client-side commands, rather than trying to run (for example) regedit /e directly.

- The batch file should explicitly “exit 0” on successful completion.

- The path to the batch file should be specified in Unix form:

ClientRunBeforeJob = "c:/bacula/bin/systemstate.bat"

rather than DOS/Windows form:ClientRunBeforeJob = "c:\bacula\bin\systemstate.bat" # INCORRECT

For Win32, please note that there are certain limitations:

ClientRunBeforeJob = "C:/Program Files/Bacula/bin/pre-exec.bat"

Lines like the above do not work because there are limitations of cmd.exe that is used to execute the command. Bacula prefixes the string you supply with cmd.exe /c . To test that your command works you should type cmd /c "C:/Program Files/test.exe" at a cmd prompt and see what happens. Once the command is correct insert a backslash (\) before each double quote ("), and then put quotes around the whole thing when putting it in the director's configuration file. You either need to have only one set of quotes or else use the short name and don't put quotes around the command path.

Below is the output from cmd's help as it relates to the command line passed to the /c option.

If /C or /K is specified, then the remainder of the command line after the switch is processed as a command line, where the following logic is used to process quote (") characters:

- If all of the following conditions are met, then quote characters on the command line are preserved:

- no /S switch.

- exactly two quote characters.

- no special characters between the two quote characters, where special is one of:

&<>()@^|

- there are one or more whitespace characters between the the two quote characters.

- the string between the two quote characters is the name of an executable file.

- Otherwise, old behavior is to see if the first character is a quote character and if so, strip the leading character and remove the last quote character on the command line, preserving any text after the last quote character.

The following example of the use of the Client Run Before Job directive was submitted by a user:

You could write a shell script to back up a DB2 database to a FIFO. The shell script is:

#!/bin/sh # ===== backupdb.sh DIR=/u01/mercuryd mkfifo $DIR/dbpipe db2 BACKUP DATABASE mercuryd TO $DIR/dbpipe WITHOUT PROMPTING & sleep 1

The following line in the Job resource in the bacula-dir.conf file:

Client Run Before Job = "su - mercuryd -c \"/u01/mercuryd/backupdb.sh '%t' '%l'\""

When the job is run, you will get messages from the output of the script stating that the backup has started. Even though the command being run is backgrounded with &, the job will block until the db2 BACKUP DATABASE command, thus the backup stalls.

To remedy this situation, the “db2 BACKUP DATABASE” line should be changed to the following:

db2 BACKUP DATABASE mercuryd TO $DIR/dbpipe WITHOUT PROMPTING > $DIR/backup.log 2>&1 < /dev/null &

It is important to redirect the input and outputs of a backgrounded command to /dev/null to prevent the script from blocking.

- Run Before Job = <command>

- The specified <command> is run as an external program prior to running the current Job. This directive is not required, but if it is defined, and if the exit code of the program run is non-zero, the current Bacula job will be canceled.

Run Before Job = "echo test"

it's equivalent to :RunScript { Command = "echo test" RunsOnClient = No RunsWhen = Before }Lutz Kittler has pointed out that using the RunBeforeJob directive can be a simple way to modify your schedules during a holiday. For example, suppose that you normally do Full backups on Fridays, but Thursday and Friday are holidays. To avoid having to change tapes between Thursday and Friday when no one is in the office, you can create a RunBeforeJob that returns a non-zero status on Thursday and zero on all other days. That way, the Thursday job will not run, and on Friday the tape you inserted on Wednesday before leaving will be used.

- Run After Job = <Command>

- The specified <Command> is run as an external program if the current job terminates normally (without error or without being canceled). This directive is not required. If the exit code of the program run is non-zero, Bacula will print a warning message. Before submitting the specified command to the operating system, Bacula performs character substitution as described above for the RunScript directive.

An example of the use of this directive is given in the Tips chapter of the Bacula Enterprise Problems Resolution guide.

See the Run After Failed Job if you want to run a script after the job has terminated with any non-normal status.

- Run After Failed Job = <Command>

- The specified <Command> is run as an external program after the current job terminates with any error status. This directive is not required. The command string must be a valid program name or name of a shell script. If the exit code of the program run is non-zero, Bacula will print a warning message. Before submitting the specified command to the operating system, Bacula performs character substitution as described above for the RunScript directive. Note, if you wish that your script will run regardless of the exit status of the Job, you can use this :

RunScript { Command = "echo test" RunsWhen = After RunsOnFailure = yes RunsOnClient = no RunsOnSuccess = yes # default, you can drop this line }An example of the use of this directive is given in the Tips chapter of the Bacula Enterprise Problems Resolution guide.

- Client Run Before Job = <Command>

-

This directive is the same as Run Before Job except that the program is run on the client machine. The same restrictions apply to Unix systems as noted above for the RunScript. ClientRunBeforeJob can be used with Backup and Restore jobs.

- Client Run After Job = <Command>

- The specified <Command> is run on the client machine as soon as data spooling is complete in order to allow restarting applications on the client as soon as possible. ClientRunBeforeJob can be used with Backup and Restore jobs.

Note, please see the notes above in RunScript concerning Windows clients.

- Rerun Failed Levels = <yes|no>

- If this directive is set to yes (default no), and Bacula detects that a previous job at a higher level (i.e. Full or Differential) has failed, the current job level will be upgraded to the higher level. This is particularly useful for Laptops where they may often be unreachable, and if a prior Full save has failed, you wish the very next backup to be a Full save rather than whatever level it is started as.

There are several points that must be taken into account when using this directive: first, a failed job is defined as one that has not terminated normally, which includes any running job of the same name (you need to ensure that two jobs of the same name do not run simultaneously); secondly, the Ignore FileSet Changes directive is not considered when checking for failed levels, which means that any FileSet change will trigger a rerun.

- Spool Data = <yes|no>

-

If this directive is set to yes (default no), the Storage daemon will be requested to spool the data for this Job to disk rather than write it directly to the Volume (normally a tape).

Thus the data is written in large blocks to the Volume rather than small blocks. This directive is particularly useful when running multiple simultaneous backups to tape. Once all the data arrives or the spool files' maximum sizes are reached, the data will be despooled and written to tape.

Spooling data prevents interleaving date from several job and reduces or eliminates tape drive stop and start commonly known as “shoe-shine”.

We don't recommend using this option if you are writing to a disk file using this option will probably just slow down the backup jobs.

NOTE: When this directive is set to yes, Spool Attributes is also automatically set to yes.

- Spool Attributes = <yes|no>

-

The default is set to yes, the Storage daemon will buffer the File attributes and Storage coordinates to a temporary file in the Working Directory, then when writing the Job data to the tape is completed, the attributes and storage coordinates will be sent to the Director. If set to no the File attributes are sent by the Storage daemon to the Director as they are stored on tape.

NOTE: When Spool Data is set to yes, Spool Attributes is also automatically set to yes.

- SpoolSize=bytes

- where the bytes specify the maximum spool size for this job. The default is take from Device Maximum Spool Size limit. This directive is available only in Bacula version 2.3.5 or later.

- Where = <directory>

- This directive applies only to a Restore job and specifies a prefix to the directory name of all files being restored. This permits files to be restored in a different location from which they were saved. If Where is not specified or is set to slash (/), the files will be restored to their original location. By default, we have set Where in the example configuration files to be /tmp/bacula-restores. This is to prevent accidental overwriting of your files.

- Add Prefix = <directory>

- This directive applies only to a Restore job and specifies a prefix to the directory name of all files being restored. This will use File Relocation feature implemented in Bacula 2.1.8 or later.

- Add Suffix = <extention>

- This directive applies only to a Restore job and specifies a suffix to all files being restored. This will use File Relocation feature implemented in Bacula 2.1.8 or later.

Using Add Suffix=.old, /etc/passwd will be restored to /etc/passwsd.old

- Strip Prefix = <directory>

- This directive applies only to a Restore job and specifies a prefix to remove from the directory name of all files being restored. This will use the File Relocation feature implemented in Bacula 2.1.8 or later.

Using Strip Prefix=/etc, /etc/passwd will be restored to /passwd

Under Windows, if you want to restore c:/files to d:/files, you can use :

Strip Prefix = c: Add Prefix = d:

- RegexWhere = <expressions>

- This directive applies only to a Restore job and specifies a regex filename manipulation of all files being restored. This will use File Relocation feature implemented in Bacula 2.1.8 or later.

For more informations about how use this option, see this.

- Replace = <replace-option>

- This directive applies only to a Restore job and specifies what happens when Bacula wants to restore a file or directory that already exists. You have the following options for <replace-option>:

- RestoreClient = <client-resource-name>

-

The RestoreClient directive specifies the default Client (File Daemon) that will be used with the restore job. If this directive is not set then a default restore client will be set to a backup client as usual. It is possible to define a dedicated restore job and run an automatic (scheduled) restore tests of your backups which will be redirected to the restore test Client.

- always

- when the file to be restored already exists, it is deleted and then replaced by the copy that was backed up. This is the default value.

- ifnewer

- if the backed up file (on tape) is newer than the existing file, the existing file is deleted and replaced by the back up.

- ifolder

- if the backed up file (on tape) is older than the existing file, the existing file is deleted and replaced by the back up.

- never

- if the backed up file already exists, Bacula skips restoring this file.

- Prefix Links=<yes|no>